Two weeks ago, I shared the first version of HASHI — a privacy-first bridge that let you chat with multiple AI agents through a single WhatsApp or Telegram account. It was Version 1.0: functional and fun.

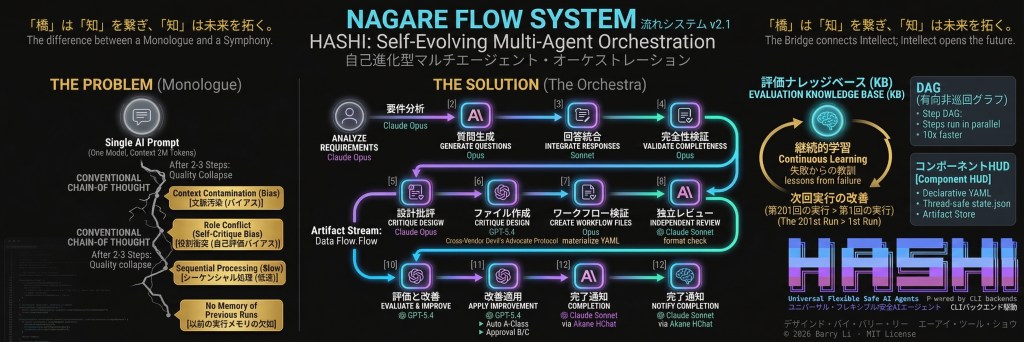

Today, I am releasing HASHI v2.1, and I genuinely struggle to describe how much has changed. If v1 was a bridge for conversations, v2.1 is a self-evolving multi-agent orchestration platform — one that can design its own workflows, critique its own designs using a different AI vendor, learn from every run, and recover from failures automatically.

(Illustration generated by AI)

Let me walk you through what happened.

The v2.0 Foundation: Agents That Can Actually Do Things

Before we get to the headline feature, let me cover the v2.0 upgrades that made v2.1 possible. These shipped over the past two weeks:

🔧 Tool Execution Layer (11 Local Tools)

In v1, OpenRouter-backed agents could only talk. They could write beautiful prose about editing a file, but they couldn’t actually touch one. That’s fixed now.

Every OpenRouter agent can now execute 11 built-in tools: run shell commands, read and write files, apply patches, search the web (via Brave API), fetch URLs, make HTTP requests, list and kill processes, and even send Telegram messages. The bridge handles the tool loop — the model proposes a tool call, HASHI executes it locally, returns the result, and the model continues. Up to 15 iterations per turn.
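To make the tool loop concrete, here is a minimal sketch of the propose, execute, return cycle. It is an illustration, not HASHI's actual code: run_turn, fake_model, read_file_stub, and the message shapes are hypothetical, and only the 15-iteration cap comes from the description above.

```python
from typing import Callable

MAX_ITERATIONS = 15  # per-turn cap mentioned in the post


def run_turn(model: Callable[[list], dict], tools: dict, user_message: str) -> str:
    """Loop: the model proposes a tool call, we run it locally, and feed the result back."""
    messages = [{"role": "user", "content": user_message}]
    for _ in range(MAX_ITERATIONS):
        reply = model(messages)
        call = reply.get("tool_call")
        if call is None:
            return reply["content"]  # plain text answer ends the turn
        handler = tools.get(call["name"])
        try:
            result = handler(**call["args"]) if handler else f"unknown tool: {call['name']}"
        except Exception as exc:  # report tool failures to the model instead of crashing
            result = f"tool error: {exc}"
        messages.append({"role": "assistant", "tool_call": call})
        messages.append({"role": "tool", "name": call["name"], "content": str(result)})
    return "Stopped: tool iteration limit reached."


def fake_model(messages: list) -> dict:
    """Hypothetical stand-in for a real model: one tool call, then an answer."""
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        return {"tool_call": {"name": "read_file", "args": {"path": "notes.txt"}}}
    return {"content": f"The file says: {tool_results[-1]['content']}"}


def read_file_stub(path: str) -> str:
    """Hypothetical tool that pretends to read a file."""
    return f"<contents of {path}>"


answer = run_turn(fake_model, {"read_file": read_file_stub}, "What is in notes.txt?")
```

The cap matters because a model that keeps requesting tools would otherwise never hand control back to you.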

This single change transformed HASHI from “a thing you talk to” into “a thing that gets stuff done.”

🌐 Browser Automation

All agents — regardless of backend — can now control a real web browser via Playwright. Six actions: screenshot, get text, get HTML, click elements, fill forms, and run arbitrary JavaScript. Two modes: standalone headless Chromium, or CDP mode that attaches to your already-running Chrome with all your cookies and sessions intact.

My agents use this daily to check dashboards, scrape pages, and interact with web apps that don’t have APIs.

💾 Pack & Go: USB Zero-Install Deployment

This one I’m particularly proud of. Run prepare_usb.bat (Windows) or prepare_usb.sh (macOS) on any machine with internet. It downloads an embedded Python runtime, installs all dependencies, and packages everything onto a USB drive. Hand that USB to anyone — they double-click a launcher and HASHI runs. No Python installation, no pip, no terminal, nothing.

I built this because I wanted to share HASHI with people who have never opened a command line in their lives. It works.

📺 TUI: Terminal Interface

Not everyone wants a browser open. tui.py gives you a split-panel terminal UI built with Textual — log stream on top (~80%), chat input on the bottom (~20%), status bar showing current agent and backend. It connects to the same orchestrator, so Telegram messages and TUI messages share the same session.

🧠 Vector Memory

HASHI now embeds conversation turns and memories using BGE-M3 (local ONNX inference, no API calls) and stores them in bridge_memory.sqlite with sqlite-vec for cosine similarity search. When you send a message, the bridge vectorizes it, retrieves the top-K most relevant memories, and injects them into the prompt. Your agents remember things without you having to remind them.
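The retrieval step can be sketched in a few lines. This is a toy version under loud assumptions: the vectors are hand-written 3-dimensional stand-ins for BGE-M3 embeddings, the memories live in a Python list rather than sqlite-vec, and top_k_memories and build_prompt are hypothetical names.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k_memories(query_vec, memories, k=3):
    """Return the texts of the k memories most similar to the query vector."""
    ranked = sorted(
        memories,
        key=lambda m: cosine_similarity(query_vec, m["vec"]),
        reverse=True,
    )
    return [m["text"] for m in ranked[:k]]


def build_prompt(user_message, recalled):
    """Inject the recalled memories ahead of the user's message."""
    memory_block = "\n".join(f"- {text}" for text in recalled)
    return f"Relevant memories:\n{memory_block}\n\nUser: {user_message}"


# Toy data: in a real system these vectors would come from an embedding model.
MEMORIES = [
    {"text": "The user prefers short answers", "vec": [1.0, 0.0, 0.0]},
    {"text": "The project is written in Python", "vec": [0.0, 1.0, 0.0]},
    {"text": "Meetings are on Tuesdays", "vec": [0.0, 0.0, 1.0]},
]

query = [0.1, 0.9, 0.2]  # pretend embedding of "which language should I use?"
recalled = top_k_memories(query, MEMORIES, k=2)
prompt = build_prompt("Which language should I use?", recalled)
```

A brute-force scan like this is fine for a handful of memories; a vector index earns its keep once the store grows.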

Other v2.0 Additions

- Flex/Fixed Backend Switching: /backend switches between CLI and OpenRouter mid-conversation. No session restart needed.

- Workbench Web UI: React + Vite local interface for multi-agent chat.

- /dream Skill: Nightly AI memory consolidation. Your agent “sleeps,” reviews the day’s transcript, extracts important memories, and optionally updates its own personality file. Includes snapshot-based undo for morning rollback.

- Process-Tree Stop: /stop now kills the entire subprocess tree using os.killpg(). No more zombie Node.js workers holding pipes open.

- /retry Persistence: Resend your last prompt or re-run the agent’s last response.

- /memory Command: Surgical memory control. Pause injection, wipe stored data, or check status.
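For the process-tree stop, here is a minimal sketch of the general technique on POSIX systems (os.killpg does not exist on Windows). The helper names start_in_own_group and stop_tree are hypothetical, and HASHI's real /stop handler may differ in its details.

```python
import os
import signal
import subprocess


def start_in_own_group(cmd):
    """Launch cmd as the leader of a new session, and therefore a new process group."""
    return subprocess.Popen(cmd, start_new_session=True)


def stop_tree(proc, timeout=5.0):
    """Signal the whole process group: SIGTERM first, SIGKILL if it lingers."""
    # Because the child called setsid(), its process group id equals its pid.
    try:
        os.killpg(proc.pid, signal.SIGTERM)
    except ProcessLookupError:
        return proc.poll()  # the group is already gone
    try:
        return proc.wait(timeout=timeout)
    except subprocess.TimeoutExpired:
        os.killpg(proc.pid, signal.SIGKILL)
        return proc.wait()
```

Signalling the group rather than the single pid is what reaches grandchildren, such as a worker spawned by a CLI wrapper, that would otherwise keep pipes open.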

The Main Event: Nagare Flow System (v2.1)

Everything above was the foundation. Now for the part that changes the game entirely.

Nagare (流れ, Japanese for “flow”) is HASHI’s multi-agent workflow orchestration engine. It coordinates multiple AI agents — potentially from different vendors — through a declarative pipeline, producing work that no single agent or prompt chain could achieve.

Why Does This Exist?

Every AI model, no matter how capable, operates inside a single reasoning session. Within that session, it cannot:

- Run parallel sub-tasks with true separation of concerns

- Call itself with a fresh perspective to critique its own output

- Remember lessons from previous runs

- Escalate only when necessary without pausing the whole conversation

For any task requiring more than 2-3 coherent reasoning steps, quality collapses. A brilliant translation model becomes inconsistent across chapters. A capable code writer misses cross-file implications. A thorough analyst ignores its own contradictions.

Nagare solves this at the architecture level: not by making a bigger model, but by coordinating many focused agents, each excellent at its narrow role.

The 12-Step Meta-Workflow

Here’s the killer feature: describe a task in natural language, and Nagare designs a complete multi-agent workflow for it automatically.

Say you tell it: “I want a workflow that takes academic papers, extracts key claims, searches for contradicting evidence, and writes a critical analysis report.”

Nagare’s meta-workflow will:

1. Analyze requirements (Claude Opus): deep task decomposition

2. Generate pre-flight questions (Claude Opus): score each question on necessity × impact × clarity; only ask you the top 5

3. Integrate your answers (Claude Sonnet): merge human input with smart defaults

4. Validate completeness (Claude Opus): ensure nothing is missing before proceeding

5. Design the workflow (Claude Opus): full YAML + DAG + rationale

6. Critique the design (GPT-5.4): a Devil’s Advocate from a different AI vendor challenges every assumption

7. Create workflow files (Claude Opus): materialize the validated design

8. Validate the YAML (Claude Sonnet): format and schema check

9. Independent review (GPT-5.4): cross-vendor audit

10. Evaluate and improve (GPT-5.4): quality scoring + Knowledge Base update

11. Apply improvements (GPT-5.4): low-risk fixes auto-applied; high-risk queued for approval

12. Notify completion (Claude Sonnet): push notification with results

The entire pipeline runs in the background. You get notified when it’s done.
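To give a feel for what a declarative pipeline means here, the sketch below describes an abbreviated version of the steps above as plain data and lets a topological sort decide the run order. The dictionary layout, the step identifiers, and the run_step callback are my assumptions for illustration; Nagare's real workflow files are YAML and their schema is not shown in this post.

```python
from graphlib import TopologicalSorter

# Hypothetical, abbreviated pipeline: each step names its agent and its prerequisites.
PIPELINE = {
    "analyze_requirements": {"agent": "Claude Opus", "needs": []},
    "generate_questions": {"agent": "Claude Opus", "needs": ["analyze_requirements"]},
    "integrate_answers": {"agent": "Claude Sonnet", "needs": ["generate_questions"]},
    "design_workflow": {"agent": "Claude Opus", "needs": ["integrate_answers"]},
    "critique_design": {"agent": "GPT-5.4", "needs": ["design_workflow"]},
}


def execution_order(pipeline):
    """Order steps so that every step runs after the steps it depends on."""
    graph = {name: set(spec["needs"]) for name, spec in pipeline.items()}
    return list(TopologicalSorter(graph).static_order())  # raises CycleError on cycles


def run_pipeline(pipeline, run_step):
    """Run each step in dependency order, passing it the outputs of its prerequisites."""
    outputs = {}
    for name in execution_order(pipeline):
        spec = pipeline[name]
        inputs = {dep: outputs[dep] for dep in spec["needs"]}
        outputs[name] = run_step(name, spec["agent"], inputs)
    return outputs
```

Keeping the pipeline as data is what lets one agent write it and another agent critique it before anything runs.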

Cross-Vendor Anti-Bias: Why This Matters

This is the design decision I’m most proud of: Claude never evaluates Claude.

When a model writes something and then reviews it in the same session, it has already “committed” to its choices. Its review is biased. Nagare architecturally enforces independence: Claude designs, GPT critiques. Claude generates, GPT audits. This isn’t a convention you can forget to follow — it’s how the system is wired.
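One way to wire in a rule like this is to reject any pipeline where a reviewing step shares a vendor with the step it reviews. The sketch below is an assumption about how such a check could look, not Nagare's actual validator; Step, reviews, and validate_cross_vendor are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Step:
    name: str
    vendor: str
    reviews: Optional[str] = None  # name of the step whose output this step judges


def validate_cross_vendor(steps):
    """Raise ValueError if any reviewer comes from the same vendor as its author."""
    by_name = {step.name: step for step in steps}
    for step in steps:
        if step.reviews is None:
            continue
        author = by_name[step.reviews]
        if author.vendor == step.vendor:
            raise ValueError(
                f"{step.name} ({step.vendor}) may not review "
                f"{author.name} ({author.vendor}): same vendor"
            )


good = [
    Step("design_workflow", vendor="anthropic"),
    Step("critique_design", vendor="openai", reviews="design_workflow"),
]
validate_cross_vendor(good)  # passes silently
```

Running the check when the pipeline is loaded, rather than at review time, means a misconfigured workflow fails before any tokens are spent.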

I haven’t seen any other open-source project do this systematically.

Pre-Flight: Ask Everything Once, Then Run Clean

Most AI workflows either require constant babysitting or make assumptions without asking. Nagare’s pre-flight system does something different:

- Categorizes every unknown into three layers: design-time (must ask human), runtime (collected when the generated workflow runs), and implementation detail (use a smart default)

- Scores each question on three dimensions (necessity × impact × clarity) and keeps at most 5 questions

- If you don’t respond within 5 minutes, smart defaults kick in automatically

Once confirmed, the workflow runs uninterrupted. No mid-task “hey, what did you mean by…?” interruptions.
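The filtering described above can be sketched as follows. The three layers, the necessity × impact × clarity score, and the limit of 5 come from the description; the 0-to-1 scale, the Question fields, and the function names are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Question:
    text: str
    layer: str        # "design", "runtime", or "implementation"
    necessity: float  # assumed 0.0 to 1.0
    impact: float     # assumed 0.0 to 1.0
    clarity: float    # assumed 0.0 to 1.0
    default: str      # smart default used when nobody answers

    @property
    def score(self) -> float:
        return self.necessity * self.impact * self.clarity


def select_questions(questions, limit=5):
    """Keep only design-time questions and return the highest-scoring ones."""
    design_time = [q for q in questions if q.layer == "design"]
    return sorted(design_time, key=lambda q: q.score, reverse=True)[:limit]


def resolve(question, answer):
    """Use the human's answer, or the smart default if none arrived (answer is None)."""
    return answer if answer is not None else question.default
```

Multiplying the three scores, rather than adding them, means a question that is weak on any one dimension drops out quickly.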

Self-Improving: The Evaluation Knowledge Base

Every workflow run feeds lessons back into an Evaluation Knowledge Base:

- What patterns worked

- What failures occurred

- Model performance benchmarks per task type

- Improvement proposals with confidence scores

Improvements are classified into three risk tiers:

| Class | Risk | Action | Examples |
|---|---|---|---|
| A | Low | Auto-applied | Prompt rewording, timeout tweaks |
| B | Medium | Needs approval | Agent role changes, model substitution |
| C | High | Needs approval | New agents, DAG restructuring |

The 201st workflow run is genuinely better than the 1st — because the previous 200 taught the system what works.
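The three risk tiers boil down to a simple triage rule, sketched here. The class letters and actions mirror the table; the proposal dictionaries and the triage function are hypothetical.

```python
from enum import Enum


class RiskClass(Enum):
    A = "low"
    B = "medium"
    C = "high"


def triage(proposals):
    """Split proposals into those applied automatically and those awaiting approval."""
    applied, queued = [], []
    for proposal in proposals:
        if proposal["risk"] is RiskClass.A:
            applied.append(proposal)   # low risk: apply without asking
        else:
            queued.append(proposal)    # medium or high risk: wait for a human
    return applied, queued


proposals = [
    {"change": "reword the critique prompt", "risk": RiskClass.A},
    {"change": "swap the model used for validation", "risk": RiskClass.B},
    {"change": "add a new fact-checking agent", "risk": RiskClass.C},
]
applied, queued = triage(proposals)
```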

Crash Recovery and Debug Agents

Nagare uses atomic state persistence — write to tmp → fsync → rename — so if your machine crashes mid-workflow, you resume at the exact step that was interrupted, without re-running completed work.
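The write to tmp → fsync → rename pattern looks roughly like this in Python. It is a minimal sketch of the general technique, assuming the state is JSON-serializable; save_state_atomic and load_state are hypothetical names, and it does not fsync the containing directory, which stricter durability guarantees would also require.

```python
import json
import os
import tempfile


def save_state_atomic(path, state):
    """Write state so that readers see either the old file or the new one, never a partial one."""
    directory = os.path.dirname(os.path.abspath(path))
    # The temp file must live on the same filesystem for the rename to be atomic.
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    try:
        with os.fdopen(fd, "w", encoding="utf-8") as tmp:
            json.dump(state, tmp)
            tmp.flush()
            os.fsync(tmp.fileno())  # force the bytes to disk before the rename
        os.replace(tmp_path, path)  # atomic swap of the new file into place
    except BaseException:
        if os.path.exists(tmp_path):
            os.unlink(tmp_path)  # do not leave half-written temp files behind
        raise


def load_state(path, default):
    """Load the saved state, or return default if nothing has been saved yet."""
    try:
        with open(path, encoding="utf-8") as handle:
            return json.load(handle)
    except FileNotFoundError:
        return default
```

On restart, a workflow would call load_state and skip every step already recorded as complete.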

When a step fails, a Debug Agent automatically analyzes the failure and retries with an adjusted prompt, up to 3 times. Only after 3 failures does it escalate to a human. In practice, most transient errors self-recover.
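The recovery loop can be sketched like this. I am assuming that "up to 3 times" means three attempts in total; run_step, debug_agent, and EscalateToHuman are hypothetical names standing in for whatever Nagare uses internally.

```python
MAX_ATTEMPTS = 3  # assumption: three attempts in total before escalating


class EscalateToHuman(Exception):
    """Raised when automatic recovery has been exhausted."""


def run_step_with_recovery(run_step, debug_agent, prompt):
    """Try a step; on failure let the debug agent adjust the prompt, then retry."""
    last_error = None
    for attempt in range(1, MAX_ATTEMPTS + 1):
        try:
            return run_step(prompt)
        except Exception as exc:
            last_error = exc
            if attempt < MAX_ATTEMPTS:
                # The debug agent sees the failing prompt and the error, and proposes a fix.
                prompt = debug_agent(prompt, exc)
    raise EscalateToHuman(f"step failed {MAX_ATTEMPTS} times") from last_error
```

Passing the exception to the debug agent is the important part: a blind retry only helps with transient errors, while an adjusted prompt can also fix a step that fails the same way every time.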

The Big Picture: Three Generations in Two Weeks

| Version | What It Was | Released |
|---|---|---|
| v1.0 | A chat bridge: talk to AI agents via WhatsApp/Telegram | Mar 15 |
| v2.0 | A tool platform: agents that can take real actions locally | Mar 23 |
| v2.1 | A self-evolving orchestration engine: agents that design, critique, and improve their own workflows | Mar 28 |

Each version didn’t just add features — it changed what the system fundamentally is.

Get Started

HASHI is open source under the MIT License.

- GitHub: github.com/Bazza1982/HASHI

- Requirements: Python 3.10+ and at least one AI backend (Claude CLI, Gemini CLI, Codex CLI, or an OpenRouter API key)

- Quick start: Clone → pip install -r requirements.txt → python onboarding/onboarding_main.py

- USB deployment: Run prepare_usb.bat / prepare_usb.sh → hand the USB to anyone

Honest Disclaimer

This is still a prototype built through vibe-coding. I’m a PhD candidate in sustainability assurance, not a software engineer. Every line of code was written by AI (Claude, Gemini, Codex) and cross-reviewed by AI, with me directing the architecture and making judgment calls.

It works. I use it every day — my agents check my email, manage my calendar, write code, and now orchestrate multi-step workflows autonomously. But expect edge cases, cryptic error messages, and the occasional surprise.

If you find bugs, the Issues page is always open.

Nagare — because the most capable model and the cleverest prompt are still just one voice. Orchestration is the difference between a monologue and a symphony.

Built with Vision. Written by AI. Directed by Human.